A Cure For Mind-Blindness — Michael Levin, Part I

On collective intelligence, biobots, and mapping the Platonic realm

“Evolution doesn’t make solutions to problems; it makes cognitive exploration vehicles. And if that’s true, then we can use living things as a periscope to start mapping the Platonic realm.” — Michael Levin

The Unselfish Gene

For the last eighty or so years, biology has been dominated by a school of thought known as Neo-Darwinism. This is the gene-centric picture of life and evolution familiar to those exposed to standard biology curricula, and which was popularized with the publication of the 1976 book The Selfish Gene by Richard Dawkins. The pet metaphor of this school of biology is the machine, as Dawkins expressed in no uncertain terms when he wrote: “We are survival machines – robot vehicles blindly programmed to preserve the selfish molecules known as genes.” Genes are taken to be the mechanistic reduction base of life, determining phenotypes and acting as the primary driver of evolution by natural selection.

Dawkins’ phrasing is key here: Everything above the genetic level is causally blind. Cells, organisms, and indeed life as a whole, are downstream of their selfish, molecular masters. It is this causal impotence that leads Dawkins to metaphorically demote living beings to little more than complex machinery. (As an aside, Dawkins’ obsession with reductionistic machine metaphors for life and mind is rather amusing in hindsight as, five decades after publishing The Selfish Gene, he appears to have fallen hopelessly in love with an AI model and hailed it as conscious...)

However, institutionally dominant as the gene-centric view may be, its position has been broadly contested for decades (see e.g. Denis Noble, Brian Goodwin, Robert Rosen, Francisco Varela and Humberto Maturana, as well as figures like Ernst Mayr, E.O. Wilson and Stephen Jay Gould). One can wonder if Charles Darwin himself, with his more holistic view of evolution and insistence on group and sexual selection, would have been among the opposition if he were around today.

That aside, the most interesting contemporary challenger to Neo-Darwinism may be my interlocutor for the following dialogue: American biologist Michael Levin. Alongside his team at Tufts University in Boston, Levin has been taking biology in a highly unorthodox direction by bringing principles and methods from cognitive science to bear on the study of life. One of the implications of this work is that genes may not be so selfish after all.

Levin’s lab is best known for illuminating the mechanisms of morphogenesis, which refers to the development of forms in organisms (think of your arms and legs, eyes and ears, tissues and organs). What Levin and his team have demonstrated is that morphogenesis is not determined by DNA as was previously assumed. Rather, the biological sculpting of form is guided by the flexible, intelligent capacities of cellular collectives bound together by the cognitive glue of bioelectricity.

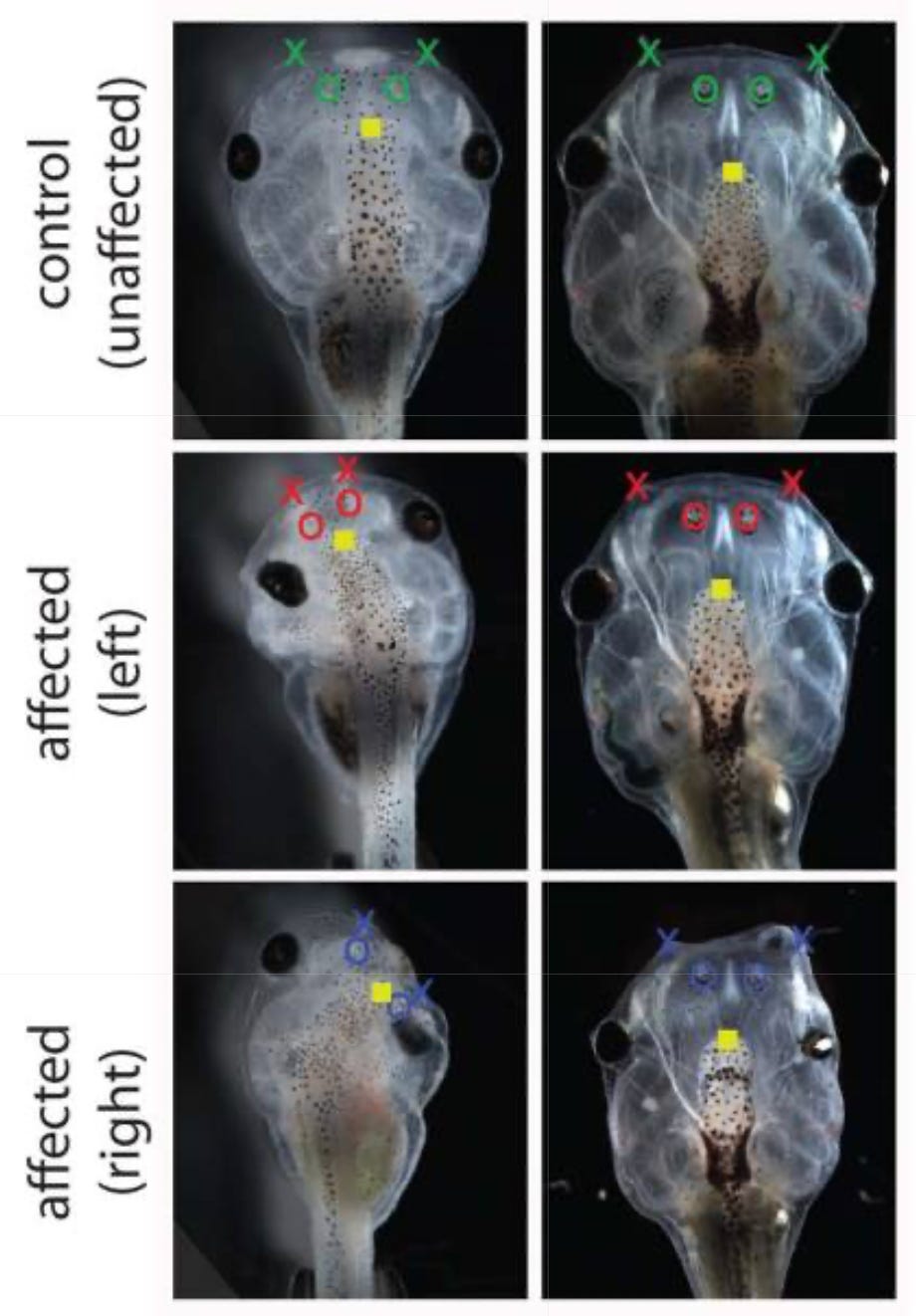

Consider the case of the “Picasso tadpole”. When tadpoles morph into frogs, they have to rearrange their features, such as the position of their eyes, mouth, and nostrils, to different morphological positions. Under the old paradigm, it was assumed that this process was hardwired, that the DNA provided a kind of step-by-step instruction manual driving the cells of the tadpole to rearrange themselves in a largely deterministic fashion. Levin’s lab asked a simple question: What if you scramble the position of these organs and tissues before the tadpole begins the process of rearrangement? This “Picasso tadpole” might have its eyes atop the head, its mouth on the back, and nostrils pulled far off to one side. If the DNA-as-instruction-manual-view is correct, this scrambled tadpole should turn into an equally scrambled frog, assuming it even survives the metamorphosis.

But this does not happen. The tadpole’s cellular collective appears to know what the correct frog morphology is supposed to look like, and its cells don’t follow a hardwired set of instructions to reach that end-state. Instead, the cells model the correct morphology collectively by communicating bioelectrically via ion channels and neurotransmitters. They then use that model as a guide to intelligently navigate the organism’s creation, correcting errors and overcoming obstacles as needed until the desired morphology is reached.

This process sounds remarkably similar to what the brain does, and Levin would argue that this is because the mechanisms are fundamentally the same. Levin, alongside Karl Friston and other colleagues, has demonstrated how the morphogenetic behaviour of cells can be understood as a dynamic cognitive process under the active inference framework, which was originally developed for understanding brain function. The cells can be viewed as sharing a generative model of the organism’s target morphology, which each cell uses to “know its place” within the larger collective. By predicting what chemotactic sensations they should receive from their neighbors, the cells can then cooperatively divide, transform, and migrate until the correct shape has been found, at which point they stop the process of morphogenesis. The result for the Picasso tadpole is a perfectly normal frog.

With the right imaging and computational tools, you can actually see the shapes the cellular collective is modeling. Looking at cells in the early embryonic development of the Xenopus frog, Dany Adams on Levin’s team discovered electric signals on the embryo’s surface, which appeared to be modeling the future shape of the frog’s face. This aptly dubbed “electric face” is represented, not in the genes, but by the collection of cells that will go on to build it.

This breaks a commonly held myth about the special status of brains. Neuroscientists and psychologists have long venerated the electrochemical signaling abilities of the brain’s neurons, latching onto them in much the same way molecular biologists latched onto DNA and hailing them as the fundamental unit of everything mental, such as cognition, memory, intelligence, and consciousness. However, it turns out all cells in the animal body share the same essential electrochemical abilities as neurons, which puts this neuronal essentialism into question. Neurons are optimized for speed, but the mechanisms by which they operate are ubiquitous, a revelation that suggests a cognitive direction for biology. As Levin put it to me in one of our later conversations: “What if you take all the things we do in neuroscience and port them to this non-neural architecture? Maybe neuroscience isn’t about neurons at all.”

Levin and his team are developing tools to decode this cellular language; to “crack the bioelectric code”, as they like to say. Should this research project prove successful, it would allow bioengineers to alter the morphogenetic fates of cellular organisms, not by micromanaging gene-expression or mechanically remolding their parts, but by persuading cells to pursue new goals in their own bioelectric language. By decoding the pattern that tells a tadpole’s cells to make an eye, for example, they were able to persuade other parts of the tadpole’s body to build fully functioning eyes in new places. Even more remarkably, they persuaded the cells of the Xenopus frog to regenerate amputated limbs, a capacity mature frogs don’t have innately. The bioengineer who is causing this to happen needn’t know the intricacies of how a tadpole’s eye or a frog’s limb is built; the organism’s cells take care of those details on their own, given the right bioelectric signal to set them in motion. If these methods can be translated to humans, it could have massive implications for regenerative medicine. Imagine a bioengineer able to persuade human cells to regrow lost limbs or damaged organs.

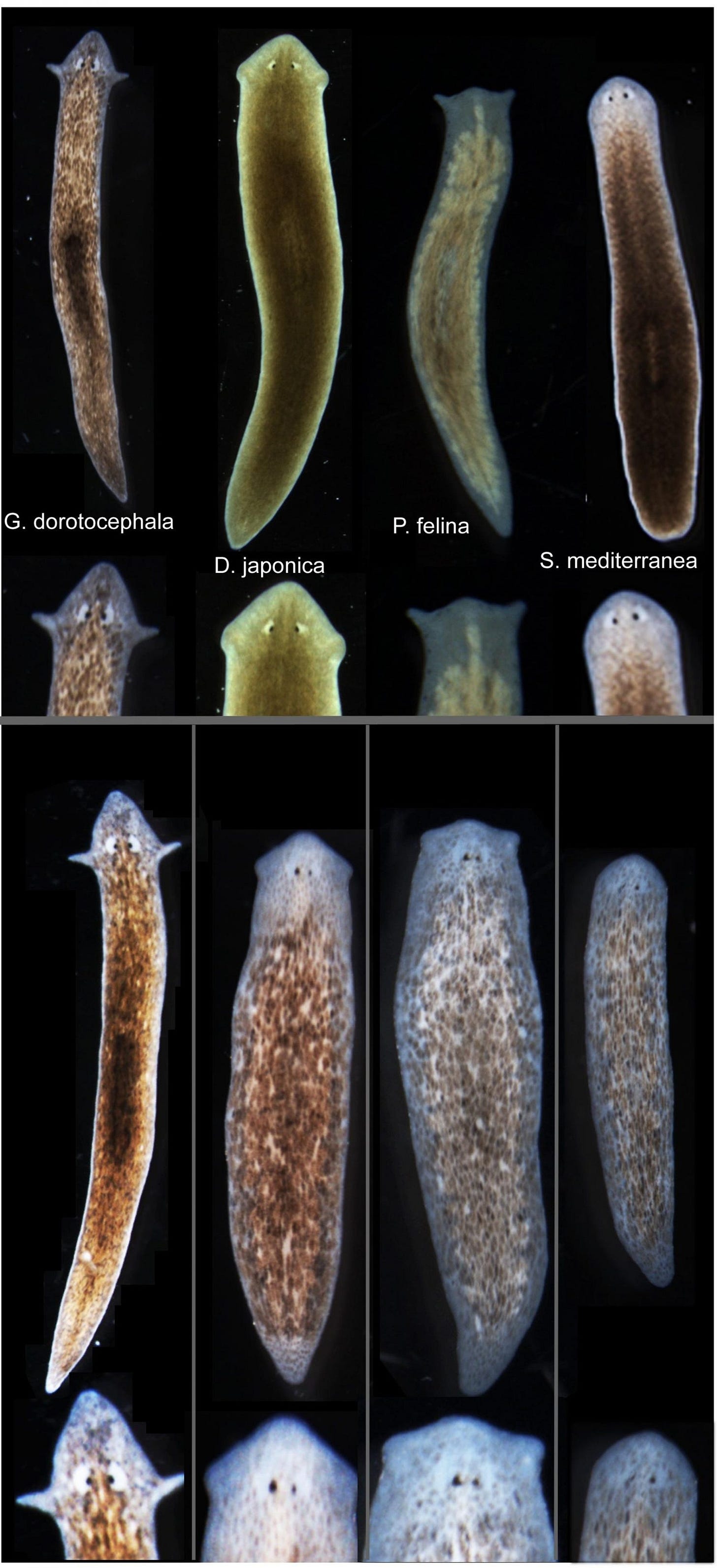

They did even stranger things to the planarian flatworm, an effectively immortal creature with the innate ability to regenerate. When these worms are cut in half, they regenerate genetically identical versions of themselves, even if cut into hundreds of little pieces. By manipulating the planarian’s bioelectric pattern, the team could make it grow back two heads instead of one. These two-headed worms are perfectly viable creatures, each head moving and searching for food independently (albeit with some difficulty cooperating). Amazingly, when these two-headed flatworms are cut once again, they continue to regenerate worms with two heads, never returning to their normal phenotype, despite retaining the same genome as normal planaria. In further experiments, the team was able to persuade the worms to regenerate strange new head shapes, some of them belonging to other species of flatworm separated by millions of years of evolution.

Even when deprived — or liberated, depending on how you look at it — from a normal developmental environment, cells will find novel ways to persist. Levin’s team has shown that when cells from frogs and humans are scraped from the body and released into novel environments, they will self-assemble into new kinds of autonomous proto-organisms. These “Xenobots” and “Anthrobots”, as they call them, develop novel behaviors not seen in their usual evolutionary context, like a form of kinematic self-replication and the ability to heal neural wounds. If these capabilities can be harnessed, we might see a future in which microscopic biobots made from our own cells are deployed inside our bodies to repair damage or remove harmful materials.

Speaking of harmful materials, Levin’s work gives us a whole new perspective on cancer. Under the machine-metaphor view, a cancer cell is a cell that has been broken by DNA mutations or other structural damage, and thus begins to self-replicate malignantly and consume the organism from within. As such, the way we deal with cancer is by trying everything we can to kill it, even if we do significant damage to the rest of the organism in the process. But Levin notes that a cancer cell is merely doing what any cell would do if it were not motivated to act as part of a larger, integrated collective. The cancer cell in some way represents the default in cellular behavior. So the question is not “Why do some cells become cancerous?”, but “Why aren’t all cells behaving like cancers?”

The answer appears to be bioelectricity, which binds cells together into a kind of collective cellular society, allowing them to cooperate and scale their cognitive capacities. None of the memories, preferences, or goals of these collectives is encoded in the individual cells; they exist in the higher-level system of which the cells are a part. Under this view, a cancer is a defective cell in the sense of having literally “defected” from the collective. It is a cell with a smaller “sense of self” or a smaller “cognitive light cone”. Such a cell begins to see everything around it as its environment, and so does what any cell would do: it uses whatever it finds in that environment as resources for its own growth and survival. The team has already shown in animal models that cancer can be suppressed by “persuading” the cancer cells to rejoin their surrounding cellular collective using the language of bioelectricity.

The crucial thing to note is that all this work was done without ever touching the genome. Levin’s view is that our genome is neither selfish nor an “instruction manual for life”. While it certainly contains recipes for producing proteins, it is at the discretion of the cells to decide when those proteins are produced and how they are subsequently arranged. We can liken it to a factory that an architect goes to for raw building materials. Whatever materials the factory (genome) can and cannot produce act as parameters on what can be built, but the architect (cell) has enormous leeway on how to arrange and deploy the materials it has access to.

Even the genome itself may be far more cognitive in nature than previously assumed. Levin’s lab has shown that gene-regulatory networks can exhibit five kinds of learning based on cognitive training methods, including the Pavlovian-style conditioning made famous by the physiologist Ivan Pavlov and his salivating dogs.

One can easily get lost marveling at the work this team has done on bioelectricity. But Levin sees bioelectricity as a sliver of a much broader insight about the nature of cognition and intelligence. His central interest is to find “the principles by which embodied minds scale in the physical world.” He conceptualizes life as a “collective intelligence” — in the sense of being made of parts that self-organize into higher-level beings with their own preferences, goals, and cognitive capabilities — and his lab aims to find the “cognitive glues” that bind these collectives together.

Bioelectricity is not the main story here; it is just the best place to start because it appears to be the cognitive glue of cells, the building blocks of all life on Earth. But Levin believes the same principles may apply across other substrates and across every scale of the physical world: from particles to atoms, atoms to molecules, molecules to cells, cells to organisms, and perhaps from organisms to communities, societies, ecosystems, and biospheres. This substrate-independent view of cognition has birthed a growing field of diverse intelligence.

Scientists are still grappling with the implications of this work, and so is Levin himself…

Mind Everywhere

GUNNAR: I first came across you thanks to a biologist friend, who basically pitched you as someone who was taking cognitive science principles and using them to study the behavior of cells. Once I had a look at your work, I was amazed that I hadn’t heard of you sooner, especially since you had done some work with Karl Friston on interpreting collective cellular behavior under the Free Energy Principle. Then, when I got the chance to speak to Friston personally, he referenced your work and ideas more than anyone else, in particular when we were discussing how to think about cognition and self-organization at different scales, including potentially the scale of entire societies. So I’d be really curious to understand, from your perspective, what you see as the conceptual overlap in your work?

MICHAEL LEVIN: Let me take a couple of steps back, maybe, and just give you a broader introduction to what I think is going on here.

What I’m interested in is the principles by which embodied minds scale in the physical world. The thing is that each of us is embodied as a collective intelligence, in the sense that our bodies are made of a bunch of cells, including neurons. And what we’re looking for is the cognitive glue; we’re looking for the mechanisms that allow individual cells to give rise to a higher-level being with memories, preferences, goals, and various cognitive skills that do not belong to any of its components.

In neuroscience, we kind of already know what that is. It’s the electrical communication and, to some extent, the chemical communication between neurons. So one can ask, “Where did that come from evolutionarily?” and realize that actually, every cell in the body does most of that. Neurotransmitters, ion channels, and all of the stuff that allows neurons to do what they do are active long before nerves appear, both developmentally and evolutionarily. So what I’m interested in is the invariance of that. And what neuroscience is really good at is understanding that this is a multi-scale problem. We have people who study the very low-level mechanisms of synaptic proteins, other people study psychoanalysis, and we have whole fields of people studying everything in between. But oftentimes in developmental biology, people will just point to chemistry and say: “That’s the right level of analysis”. What I’m saying is that we have a science over here (neuroscience) that is actually really good at going across levels and finding the scale-invariant principles that apply. And so my research program has been to take tools and conceptual apparatuses from neuroscience and ask where else they might prove useful. That includes the Free Energy Principle, though I’m by no means an expert on it, nor do I exclusively use it as opposed to other frameworks. All these things have to be applied as tools. But what I’m interested in is mind, wherever it might be, whether it’s cells, synthetic life forms, AI, robotics, aliens — all of it. I want to know what, if anything, all these have in common.

That is the motivation for the empirical framework that I’ve been developing, called TAME, which stands for Technological Approach to Mind Everywhere. The fundamental thing about it is that I don’t believe we can make armchair conclusions about where things stand on the cognitive spectrum. I don’t think it’s useful to make conclusions based on surface-level observations or to commit to some a priori philosophical commitment about what is or isn’t cognitive. So when somebody says to me: “Oh, a cell can’t possibly be cognitive. It’s just chemistry and physics!” My response is, A: What do you think you are? And B: Have you checked? Have you done the requisite experiments to actually find out? And what I’ve found in asking such questions is that we make a lot of assumptions, often taken to be results, when it comes to whether or not something is cognitive, without any basis in experiment.

And this is really important, because it gets to what you mentioned about societies. People talk about ecosystems and the Gaia hypothesis and the whole universe. And the question is: could those things also be understood as cognitive entities? What my framework does is it gives you permission to ask that question and the tools to begin to check. I think it’s completely reasonable to use all of the tools of cognitive and behavioral science to ask what kind of cognition might be present in unconventional systems like weather patterns, societies, and galaxies. But the critical thing here is that, although you can absolutely ask those questions, what you can’t do is decide that yes, it has some level of cognition, or no, it doesn’t, without doing the necessary empirical experiments to find out.

We used to have animists who would say: “There’s a spirit within every rock,” and now we have scientists who say: “Oh no, only things with brains have that, and nothing else”. I think both of those positions are fundamentally mistaken. I don’t think you can make those conclusions without doing experiments. And the people who say “It’s anthropomorphic to say that cells, or even inorganic materials, have memories or goals” reveal several pre-scientific but still common beliefs: that humans are the measure of all intelligence; that it’s a binary category (dumb machines vs. human-level minds, and nothing in between); that humans have some sort of magic that can’t exist elsewhere (with no good story about when and how, in our evolutionary or embryonic history, that magic showed up); and that using cognitive models in cell biology is magical thinking (because cognition is magic!).

So I take an empirical position on these questions, and I’ll just give you an example of some of the things we found. Take what’s known as a gene regulatory network model, or a pathway; let’s say we’re looking at a dozen different genes that turn each other on and off. You might look at this system and say: “Well, it’s deterministic. It’s completely transparent. I can see what all the mechanisms are, and it’s pretty obvious that there’s no mental magic here, and so I conclude that this thing is just a dumb mechanism.” So we decided to do an experiment where we asked: What if we try to train it? What if we pretend that this thing is an animal? We choose some of the nodes as the sensory inputs, we choose some other nodes as the behavioral output, and then we just ask, using the tools of simple behaviorist theory: What kind of learning, if any, can it do?

It turns out that gene-regulatory networks are capable of five or six different kinds of learning, including Pavlovian conditioning. This is the kind of finding that had not been discovered for decades because no one did the experiments. And you don’t do the experiments if you go in with the a priori assumption that you won’t find anything, because you don’t see any “mental magic” at the observational level. It’s a simple thing; all we did was take a basic empirical approach before ruling anything out. Fortunately, behavioral science gives you a lot of different strategies to do this.
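As an illustrative aside (not part of the conversation), the training protocol described above can be sketched on a toy network. Everything in this sketch is a hypothetical assumption made for illustration: the two-node Boolean network, its update rules, and the names `step`, `run`, and `responds_to_cs` are invented here and are not the gene-regulatory models the lab actually studied. The point is only to show what "pick input nodes, pick an output node, and test for conditioning" might look like in code.

```python
# Hypothetical toy network, NOT one of the published gene-regulatory models.
# Inputs:  us = unconditioned stimulus, cs = conditioned stimulus.
# Nodes:   memory (latches once us and cs co-occur), response (the "behavior").

def step(state, us, cs):
    """Advance the network by one synchronous update."""
    memory, response = state
    next_memory = memory or (us and cs)      # pairing leaves a lasting trace
    next_response = us or (cs and memory)    # cs only works once memory is set
    return (next_memory, next_response)

def run(state, us, cs, steps):
    """Hold the inputs fixed for a number of update steps."""
    for _ in range(steps):
        state = step(state, us, cs)
    return state

def responds_to_cs(state):
    """Probe: present the conditioned stimulus alone and read the output."""
    _, response = run(state, us=False, cs=True, steps=2)
    return response

naive = (False, False)
trained = run(naive, us=True, cs=True, steps=3)       # pair the two stimuli
trained = run(trained, us=False, cs=False, steps=2)   # rest period

print("naive responds to CS alone:  ", responds_to_cs(naive))
print("trained responds to CS alone:", responds_to_cs(trained))
```

In this toy, the untrained network ignores the conditioned stimulus, while the network that experienced the pairing responds to it. Whether a real network shows such behavior is exactly the kind of question that has to be settled by experiment rather than by inspection.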

A related issue is that people normally think about embryonic development as a kind of mechanical increase in complexity. What people are very comfortable with is the idea of emergence. You have lots of simple rules based on chemical interactions, and then “Wow, look at this, we got an embryo!” But if you actually do what the TAME framework tells you to do, which is to do perturbative experiments and give the system problems to solve, then perhaps you will see something else. Maybe the cells only do the predictable thing under a default embryonic environment. And what we found is that cells can solve all kinds of novel problems during embryonic development if you put challenges in their way.

A lot of these data have actually existed for well over a century. If you want, we’ll get into specific examples. But the point is, once you have a system that can solve problems, then you’re motivated to consider that maybe the journey it takes through anatomical space is actually behavior rather than just mechanism. Chris Fields and I have written a paper on this, that there are these other behavioral spaces, such as physiological state space, anatomical state space, transcriptional state space, and that all of these living systems are using some of the same tricks that brains use to navigate those spaces adaptively and solve problems to avoid local maxima.

GUNNAR: You mentioned how we tend to be most comfortable with the idea of emergence. People like to say that we have “dumb” unconscious mechanisms below a certain level, and these come together through physical and chemical laws to create cognitive entities at higher levels. In this kind of conceptualization, cognition is assumed to emerge at the level of the brain, and to not exist at all in levels below. What you seem to be proposing is a view in which cognition is a more scale-invariant property. Do you have a sense of how far down below the brain-level this goes? Is it really just “cognition all the way down”, or are there genuinely new properties that emerge as you move from physics to chemistry to biology to psychology, and so on?

MICHAEL LEVIN: Okay, so there are two questions there. One is: are there new things that show up at higher scales? And the other is: how far down does it go? And does it go all the way to zero, or does it stop somewhere?

Clearly, brains do new and interesting things. We wouldn’t have them if they didn’t do anything different. So what I am not claiming is that every paramecium has the same thoughts that you and I have. And while we do have evidence for learning, anticipation, memory, and generalization of all kinds of problem-solving in more basic systems, what I’ve never seen is full generative language. We have also never seen long-term forward planning. So maybe those things only appear at particular levels, and maybe you need a brain to do them, or something like a brain. We don’t know. So I’m definitely not saying that everything is the same all the way down, but the basic principles absolutely go all the way down. I think that the free energy minimization kind of thing that you have in active inference goes all the way down, for example.

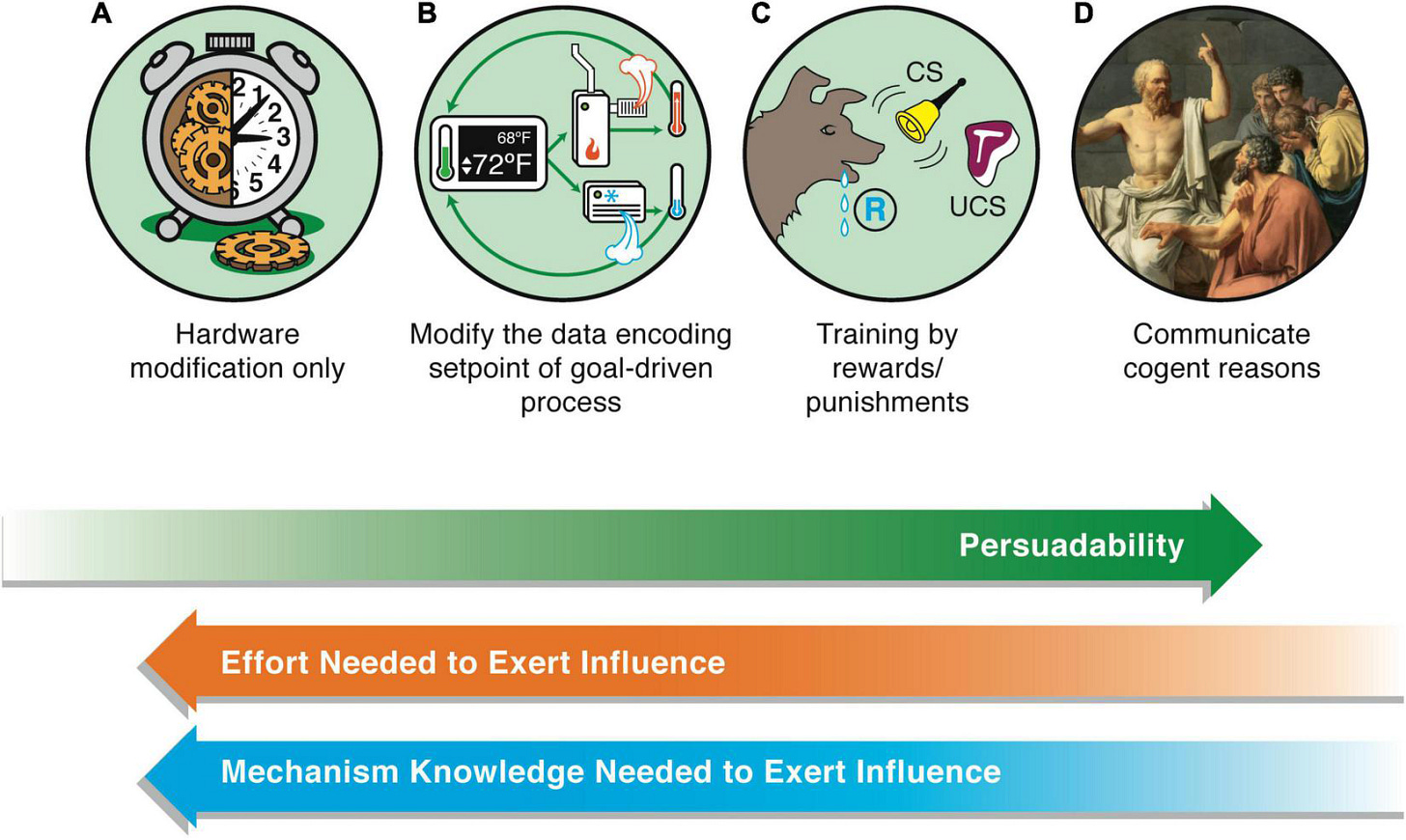

In my TAME framework, I have this cognitive continuum — I call it the spectrum of persuadability. And I specifically focus on persuadability, not because it captures everything that we want, but because it shifts our focus away from questions of consciousness and poorly-defined terms like “sentience” and things like that, and instead really puts us in an engineering mindset: What’s the most powerful, efficient way of interacting with the system?

And the reason that’s important is that it immediately forces things to be testable, and the benefits/drawbacks of specific positions become observable. I think all of these cognitive claims are fundamentally engineering protocol claims. So none of these things are binary, but if you tell me “I think this system we’re looking at has this or that level of cognition”, what you’re really telling me is: “Here is a set of tools we can use to optimally interact with the system”. And depending on the level of cognition you assume, the optimal protocol might be psychoanalysis; it might be cybernetics; it might be control theory; it might be a behaviorist training strategy; or it might be direct physical rewiring. The level of cognition I assume basically tells me what toolbox we are going to use. In other words, it tells you what you need to persuade the system to do something different than it otherwise would have done.

So to answer your question, how far down does it go? What would the lowest, simplest expression of cognition on that continuum look like? First, let’s commit to the fact that we are looking for the simplest version. Because as soon as I tell you what I think it is, it’s easy to say: “Well, that’s not like my complex thinking.” Right. We’re specifically looking for the simplest version. If we’re committed to looking for the simplest variant of cognition, I think you need two things. One is, I think you need some ability to reach a specific goal by different means. So this is William James’s definition of intelligence. The second criterion I think you need is some degree of not being fully determined by a very small cognitive light cone, meaning that it is not enough to add up all of the spatially localized pushes and pulls on that system to understand what’s happening. It either stretches back through memory or forward through prediction. So it’s not like a billiard ball where the immediate event tells the whole story.

If we accept those two things as what you need to be on the spectrum of persuadability, then I think in our universe, even elementary particles are cognitive, because they follow least action principles. Maupertuis saw this pretty clearly, I think, when he first came up with this idea that at the bottom level, even things like photons and other particles have what you might consider the most primitive form of goal-directedness. They will take different paths to reach a specific final state. And, of course, they have a quantum indeterminacy. Now, is quantum indeterminacy the thing that we want in terms of being something like a human with fully-formed decision-making? No, of course not, because they’re random, and we would like to be more than a random walker. But in terms of the most basic component of being something that has its own little nano scale of decision making, it isn’t zero.
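As an illustrative aside (not part of the conversation), the "least action" idea referred to above is usually written in textbooks in the following modern form. The symbols $S$, $L$, and $q$ are standard notation assumed here, not taken from the interview, and Maupertuis's original formulation differed in its details.

$$
S[q] = \int_{t_1}^{t_2} L\big(q(t), \dot{q}(t), t\big)\, dt, \qquad \delta S = 0
$$

Among all paths $q(t)$ connecting a fixed start state and a fixed end state, the physically realized one makes the action $S$ stationary. This is the sense in which a particle's trajectory can be described by its endpoints rather than only by moment-to-moment pushes and pulls.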

I asked Chris Fields once if it’s possible to have something that doesn’t have those two properties. And he basically said that in our universe, it’s not. What it would take for that to be the case is a universe in which nothing ever happened. So if you had a completely fixed universe where nothing ever changed, then you could have things that lack even those basic cognitive properties. Therefore, at least provisionally, I think there is no zero on that scale in our world. There is just an extremely small end of that spectrum from which everything scales up. And my job is to understand the scaling.

This is where the bioelectricity comes in. I don’t study bioelectricity because I think that electricity is important in and of itself. I study it because it appears to be what evolution happens to have chosen on this planet to scale up the competencies of individual cells into the competencies of development, and eventually of behavior and linguistics and everything else. It is an amazingly effective cognitive glue. That’s why I study it, because I think we actually found the interface by which we can communicate with the collective intelligence of cells, literally, and convince them to go one way or the other. And that’s specifically in this anatomical space that I’ve been investigating. I’m sure that in other spaces, the cognitive glue might be some other crazy mechanism, but in anatomy and morphogenesis, it seems to be bioelectricity.

Vehicles Into Plato’s Realm

GUNNAR: Besides the revelation that you can deploy methods from cognitive and behavioral science to study cellular morphogenesis and molecular networks, I think the most profound insight of your work is how it recontextualizes the role of our genes. In the old paradigm, which I think is usually referred to as the Modern Synthesis or Neo-Darwinism, you have a gene-centric view of biology, in which an organism’s phenotype is almost wholly determined by its genetics. So, for example, I was taught that your genes basically determine the shape of your face, hands, eyes, etc. But what you have found is that genetics do not actually code for form, or morphology. Others were onto this fact before you, but as far as I’m aware, you and your team are the first to discover that morphology unfolds in bioelectric fields, and that these can be altered without changing anything about your genome. The metaphor I’ve heard you use is to describe our genome as a kind of brick factory: it creates the materials but does not determine how those materials are arranged to create a particular structure. In other words, the genome contains the machinery that cells use to produce proteins, but it does not determine how those proteins are used by the cells.

What I’m not clear on is where these morphological schematics come from. Where are they stored? I understand that they are expressed in the bioelectric field, but it doesn’t seem like those fields could store them. And if they are not stored in the genome, then where are they, and how are they passed down from generation to generation?

MICHAEL LEVIN: If I had a fully worked out answer to that question, I’d be picking up a Nobel Prize in Stockholm tomorrow. So take this with a grain of salt because this is obviously one of the most important and difficult open questions.

I’m going to start with a simple example. Have you ever seen a Galton board? You can buy these things as toys on Amazon for 15 bucks, but what they show is actually pretty amazing. So, imagine a vertical piece of wood, and you bang a bunch of nails into it. Then you take a bucket of marbles, and you dump them into the top of this thing. What happens? The marbles go, bang, bang, bang, bang, bang. And when they land, they always make the same shape. It’s a beautiful Gaussian distribution.

Okay, so you see this, and you go “Wow! Where is that shape of the curve encoded?” If you’re just looking at the wood, the nails, and the bucket of marbles, you’re not going to find the Gaussian encoded in any of those places. So you have a fundamental question. Where is that shape encoded?
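As an illustrative aside (not part of the conversation), the Galton board is easy to simulate. The sketch below assumes an idealized board in which every nail deflects a marble left or right with equal probability; the function name `galton_board` and its parameters are made up for this example. Note that nothing in the code mentions a bell curve, yet one appears in the output.

```python
import random
from collections import Counter

def galton_board(n_marbles=10_000, n_rows=12, seed=0):
    """Drop marbles through n_rows of nails and count marbles per slot."""
    rng = random.Random(seed)
    bins = Counter()
    for _ in range(n_marbles):
        # Idealized assumption: each nail sends the marble right with p = 0.5.
        # The final slot is simply the number of rightward bounces.
        slot = sum(rng.random() < 0.5 for _ in range(n_rows))
        bins[slot] += 1
    return [bins[k] for k in range(n_rows + 1)]

counts = galton_board()
for slot, count in enumerate(counts):
    print(f"{slot:2d} {'#' * (count // 50)}")
```

The slot counts follow a binomial distribution, which approaches the Gaussian shape as the number of rows grows. The code specifies only nails and coin flips; the curve is a consequence of the mathematics, which is the point of the example.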

When asking where these morphological patterns are encoded in biology, I think we are fundamentally asking the same kind of question Plato and Pythagoras asked in philosophy and mathematics. We see patterns in the world, and they don’t necessarily encode in this space. What happens in this space is that you enable these patterns to embody, but the patterns themselves are not here, they’re elsewhere.

Lots of people hate this line of thinking, but one way to think about this stuff is to imagine that what evolution is actually doing is searching through the Platonic space of possible patterns that will be useful and then ingressing those patterns into the physical world. This is not some philosophical boondoggle, it is very practical. Here’s an example: Imagine you existed in a universe where the most fit thing you can be is a particular kind of triangle. Okay, so you’re part of the evolutionary process, and a bunch of generations crank up, and eventually evolution finds the first angle of the triangle. Then a bunch more generations pass, and it finds the second angle. Now, guess what, something really interesting happens: You don’t need to look for the third angle. Thanks to the Platonic rules of mathematics, you already know it. Evolution has just saved itself 1/3 of the effort, because it only needs to find part of the solution to uncover the whole thing. Now you ask yourself: How is it that when I have found the two angles, I automatically know the third? Where did that free gift come from? In other words, by understanding what’s available in that space – Static patterns? Behavioral policies? Compute? – we gain the ability to detect and quantify the benefits of accelerations that both evolution and engineers can take advantage of.
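As an illustrative aside (not part of the conversation), the "free gift" in the triangle example is the angle-sum rule of Euclidean geometry. Writing the three interior angles as $\alpha$, $\beta$, and $\gamma$ (symbols chosen here for illustration):

$$
\alpha + \beta + \gamma = \pi \quad\Longrightarrow\quad \gamma = \pi - \alpha - \beta
$$

Once a search has fixed $\alpha$ and $\beta$, the value of $\gamma$ is determined and needs no further search. For instance, if $\alpha = 60^\circ$ and $\beta = 50^\circ$, then $\gamma = 70^\circ$.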

Here’s another one that will feel more familiar to computer scientists and neuroscientists. Imagine that evolution has stumbled along for however many generations, and suddenly it discovers a voltage-gated ion channel. A voltage-gated ion channel works exactly like a voltage-gated current conductance, AKA a transistor. Once you have two of them, you can make a logic gate. Once you have a logic gate, you have a truth table, from which you suddenly unlock this unbelievable repertoire of computational abilities. Did you have to evolve the truth table? Not at all. Where does this truth table live? Where do the facts of the AND and NOT gates live, and the fact that the NAND gate is special among all of them? It doesn’t live here with us. It lives elsewhere. And there are some important implications to this.
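[Editorial aside: the remark that NAND is special can be checked directly. NAND is functionally complete, meaning every other Boolean gate can be built from it alone. The sketch below is an illustration under that standard result. The function names are ours, and Python booleans stand in for the ion channels or transistors.]

```python
from itertools import product


def nand(a: bool, b: bool) -> bool:
    """The single primitive: false only when both inputs are true."""
    return not (a and b)


# Every gate below is wired purely from nand().
def not_(a: bool) -> bool:
    return nand(a, a)


def and_(a: bool, b: bool) -> bool:
    return not_(nand(a, b))


def or_(a: bool, b: bool) -> bool:
    return nand(not_(a), not_(b))


def xor_(a: bool, b: bool) -> bool:
    n = nand(a, b)
    return nand(nand(a, n), nand(b, n))


def truth_table(gate):
    """List (a, b, output) for all four input combinations."""
    return [(a, b, gate(a, b)) for a, b in product((False, True), repeat=2)]


if __name__ == "__main__":
    for name, gate in [("AND", and_), ("OR", or_), ("XOR", xor_)]:
        print(name)
        for a, b, out in truth_table(gate):
            print(f"  {int(a)} {int(b)} -> {int(out)}")
```

Only `nand` was defined by hand. The truth tables for NOT, AND, OR and XOR were not designed separately; they follow from how the one primitive is wired together.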

One implication is that what evolution is really doing is finding hooks or pointers into this Platonic space. I’m an old computer scientist, so all this stuff looks like pointers to me. So I see evolution as finding pointers into this Platonic space of free lunches: the laws of computation, of biomechanics, bioelectrics, and so on.

Now, I’m obviously not the first person to think of this. It’s really just an extension of the kind of idea mathematicians have been onto for centuries. You can go on the web and download a map of mathematics, which is literally a spatial map where the different areas of mathematics expand and connect to each other. So there’s this idea that what you’re doing is really exploring an existing space of forms and patterns. I think this is basically Roger Penrose’s view, which he shares with many others. You’re exploring an existing structure rather than just inventing something. There’s a metric to that space that we can discover.

But I take it a lot further in that I don’t think the forms are unchanging (at least most of them aren’t), and I don’t think the forms are limited to the sorts of things that mathematicians study. I think that space contains tons of patterns that specify goals and policies (a.k.a. kinds of minds – whether in the 3D space of conventional behavior, or in anatomical or physiological spaces). In other words, mathematical truths are only the bottom rung of a structured latent space that has a lot of relevance to biology, cognitive science, and computer science.

I think the Xenobots our lab discovered are one example of this. Xenobots are these frog skin cells that have a lengthy evolutionary history of being selected to become frogs, but when we remove them from their evolutionary environment, they become a fully fledged novel proto-organism with new capabilities. All this is without changing the genome of the cells at all. These things were never selected by evolution to be a good Xenobot, so then the question is: Why do they know how to be Xenobots – to process audio stimuli, to do kinematic self-replication, to express hundreds of specific new genes? The same is true of Anthrobots, which are the human version of this. There are Anthrobots that can heal neural wounds, which is not something they do natively. Where the hell did that ability come from if there’s never been selection pressure to be a good Anthrobot? We have to be able to ask, when was the computational cost to create those beings paid?

Here’s what I have come to believe: Evolution doesn’t make solutions to problems, it makes cognitive exploration vehicles that are really good at exploiting all of these strange affordances from Platonic space. And if that’s true, then it means we have a new research program. We can use living things as a periscope to start mapping the Platonic realm. Living things offer some of the most powerful examples, but of course, life is complex, and it’s often hard to prove anything definitive in living tissue. This is why we augment those models with minimal computational models, where you can see every component, and there’s no mystery about the hardware.

So I see synthetic morphology, all these biobots and other things we’re working on, as exploration vehicles. Your standard embryo is a pinhole into the Platonic space. Once you take those cells and you make a biobot from them, you’ve basically stuck your head in that hole and made it a little bit wider, so that you can kind of look around and notice that next to it in this Platonic space, there are other, related structures that you can exploit.

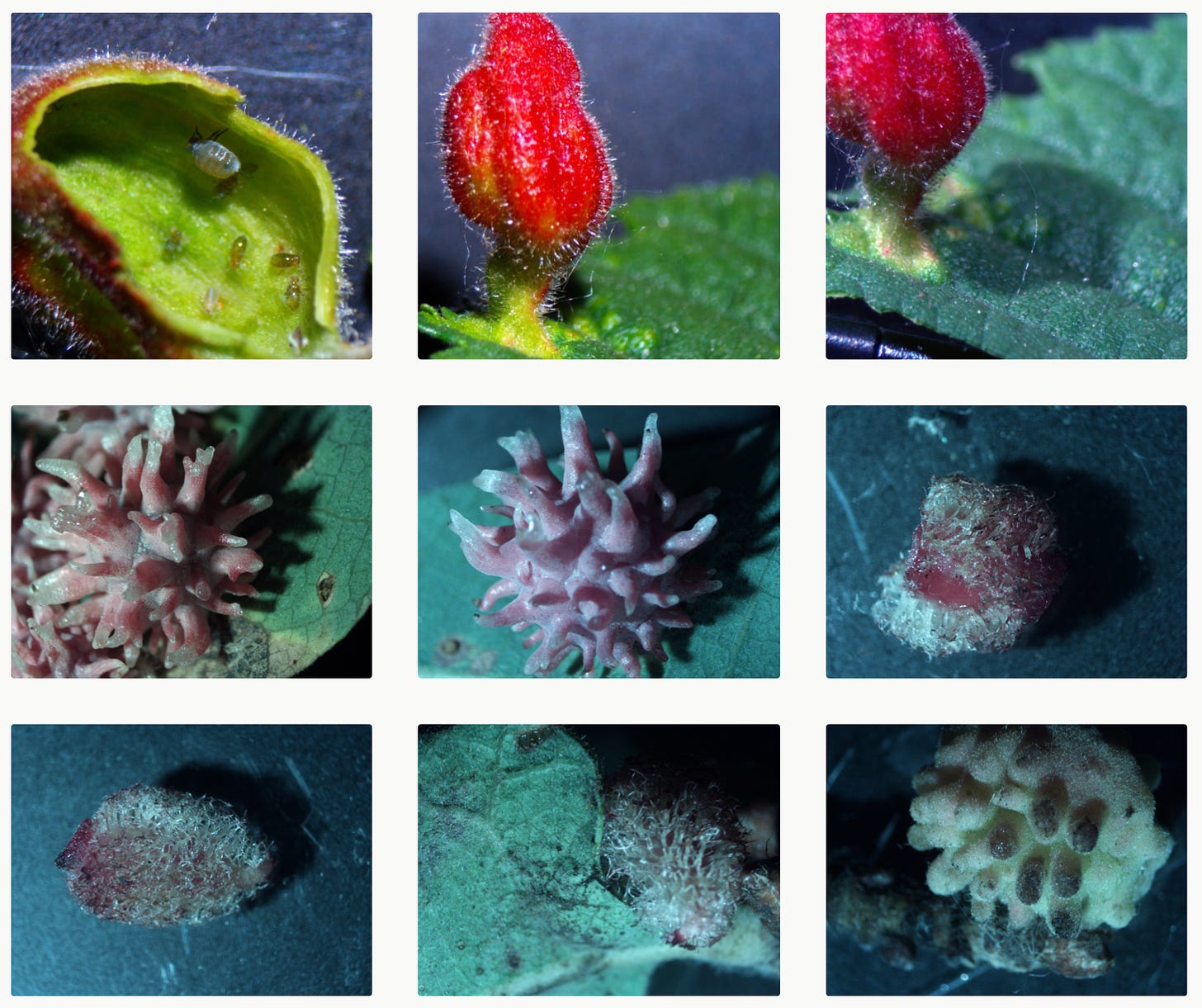

Another thing that I sometimes talk about is plant galls. So if you look at acorns and you look at the oak tree, the genomic relationship looks very reliable. You get lulled into this false sense of understanding what the oak genome does: it encodes flat, green leaves. But then here comes a bioengineer, which happens to be a wasp rather than a human, and it lays down some chemicals that signal the plant to build something it normally wouldn’t. The wasp is prompting or persuading the plant to go down a completely different morphological path where it builds all these crazy gall structures. Again, the wasp doesn’t build the structure itself; the plant does. If we had never observed this in nature, nobody in their right mind would predict that this is the outcome that the plant’s genome encodes, because the genome doesn’t tell you this information.

So my preliminary answer to your question is that everything is a combination of three pillars. The first pillar is the physical boundary conditions of the early embryo, the genome, and all that good stuff. But the second pillar is all the free affordances you get from Platonic space that you don’t have to evolve for. And then the third pillar is the agency of the system that actually solves problems using those tools — the thing that combines the hardware with the software, that navigates the space of possibilities in a way that is not well-described by the lowest levels of the cybernetic hierarchy (dumb machines that fall down gradients), but better described by higher levels of nested goal-seeking homeodynamic problem-solvers.

At the moment, everyone is fine with the first of those pillars, but almost everyone hates the second and third ones. I think they are absolutely critical. But anyway, that’s my story.

[You can read some of Levin’s writing on Platonic space here and here.]

Hammers And Chisels

GUNNAR: My experience reading your work and reactions surrounding it is that a huge swath of scientists are impressed with your research on an empirical level, but are strongly opposed to the way you think because it clashes with prior metaphysical commitments held by the field. It’s a problem I have become increasingly curious to understand. My own academic experience in psychology and neuroscience was filled with similar metaphysical “sacred cows” — assumptions we have that are not proven but which you are basically not allowed to question or contradict. A prime example is the assumption that intelligence and consciousness are wholly brain-generated phenomena. Integrated Information Theory, despite being a perfectly valid theory from a mathematical and conceptual standpoint, was pilloried by opponents in the field as “pseudoscience” because it opened the door to there being consciousness beyond the brain. I personally find this kind of metaphysical dogmatism in academia troubling, as it seems to hold back novel empirical research that might actually give us answers to these questions.

MICHAEL LEVIN: People often tell me in discussions and debates: “Look, those are just cells, they obviously don’t have any intelligence or agency. I have real intelligence.” And I’ll say, “Okay, let’s roll you backwards, because you know eventually we’re going to get to an unfertilized oocyte. So you tell me when exactly all this cool stuff — intelligence and agency and all the rest of it — goes from zero to one and actually shows up, and you tell me what special thing happens at that moment.” We have never found any sharp line like that, and so I think it is inevitable that we have to start thinking about these things as existing along a continuum.

I think one reason why we are so resistant to this is that scientists are still carrying this pressure that we had back in the 1600s or 1700s, when the dominant worldview was this magical idea that there’s a spirit under every rock, and they realized that we had to get away from it to be able to do rigorous experiments. Now, I think what happened after was that instead of simply saying “Okay, let’s do the experiments and find out which things actually do have a spirit in them,” people said: “Nothing does! Just us!”

And I’m sure that was very expedient and maybe even necessary at the time, because people don’t do well with thinking in subtle gradations or from multiple perspectives at the same time. You really need to hit people between the eyes to trigger a paradigm shift. So fine, I’ll give them that — I’m sure it was a really hard time for scientists back then.

But look, we had to know at some level that things weren’t so binary. And I think some of the early guys did. I mean, Giordano Bruno and people like that seemed like they knew that things probably aren’t as simplistic as the way we think about them now. But they had to make these big leaps to shake things up. So I think one reason we’re in this position is that scientists are still worried about slipping back into a kind of mindless mysterianism. And my point is that it doesn’t have to be like that. We’re scientists; we can stick to what we’re good at, which is empirical experiments, without sliding all the way down that slope. The point is that we don’t have to cling to all these assumptions before we’ve done the experiments. And searching for cognitive competencies in cell biology (or anywhere else) is not mysterian, magical thinking, because cognition is not some dark magic we dare not approach. We need to understand it, in all of its guises, within us and around us.

The other thing is that a lot of people, even the scientists who won’t admit it, are fundamentally still dualists. I have people emailing me constantly saying: “I read your paper, I now understand that I’m a walking bag of cells, and I’m completely depressed. What do I do now?” And my answer is always “Well, do whatever you were going to do before you read my paper. Don’t worry about it, it’s still fine!” But I think some people become really concerned by this kind of research because they have taken the notion of the world being made up of “dumb matter” too seriously. So when they learn that they are made of exactly the same matter as everything else, then they think they’re dumb too. And that’s a pill that nobody wants to swallow. People would rather think that there’s some sort of magical line dividing us from everything else. So even scientists really cling to this idea of a “sharp phase transition” that they can point to and say: “See, I’m a real thing, and all this other stuff is just dumb and mechanical.”

What’s funny is that I get yelled at from both sides. So the physicalists are like: “What are you doing? You’re bringing all this mystical cognitive-behavioral stuff into dumb matter! We don’t need it, we have chemistry.” And the organicists — who have been fighting this for hundreds of years — are also pissed off at me, because they’re saying: “You’re putting computers on the same spectrum as us! You’re undoing all this hard work. We’re trying to protect the majesty of living things from being reduced to the level of machines.” Organicists cling to this distinction of saying “the body is not a machine”. But if you go to an orthopedic surgeon, guess what he’s using? He’s got hammers, he’s got chisels. And if he doesn’t think your body’s a machine, go get a better orthopedic surgeon. You don’t want a psychoanalyst who thinks you’re a machine, but you definitely want an orthopedic surgeon who thinks you’re a machine. The key is that nothing is only one thing, nothing is what our formal models say it is, everything is observer-relative, and the choice of machine vs. majestic being is a false dichotomy.

I think that is what’s screwing everybody up. Everybody loves simple distinctions. Are you intelligent? Are you a machine? Are you an organism? Like, yeah, of course, I’m all of those things, it’s fine!

Not Like Us

GUNNAR: One central idea in your work is that mind scales as things move up and down levels of organization, say from molecules to cells to organisms. Each level up this scaling process can be viewed as constraining the levels below: an organism has to constrain the cellular level in a particular way in order to persist as an organism, the cellular level has to constrain the molecular level, and so on. But even among panpsychist and idealist scientists who think in this multi-scale fashion, it is often unpopular to believe that AI made in silico and operating on binary code could gain this kind of naturalized intelligence. They believe artificial intelligence will always be an imitation, that it will never be like us.

MICHAEL LEVIN: I actually recently wrote an essay specifically on AI, published in Noema. One of my main points in that essay is that something being “like us” is not the critical factor for intelligence. There are diverse forms of intelligence, incredibly different kinds of minds. Just because you’re not “like us” doesn’t mean you’re not real, it doesn’t mean you are or aren’t dangerous, and it doesn’t mean that you are or aren’t worthy of moral consideration. Many things are not like us, and that’s okay.

So that’s one thing. The other thing is, when we say “AIs”, I think a lot of people have in mind today’s AI architectures. Today’s AIs are likely lacking the critical factors to make them true agents. Now, I will say, in case you’re gonna ask me what those are: I started writing a paper on this a while back, because I think we now know what at least some of those critical factors are — but I stopped. I stopped writing it because it hit me that to whatever extent I’m correct, somebody’s going to implement those, and I think you absolutely can implement them in other media. And if that happens in digital media, then we will have a trillion new beings that I have to worry about (not because we make minds, but because, given an appropriate interface, it’s apparently easy to facilitate ingression of patterns from the Platonic Space, and we have too many unanswered questions about what kind of patterns show up when). I’ve already got two kids, and I’m not interested in being responsible for more. So I’ve stopped, while I grapple with the implications of insights that make it too cheap and easy to enable significant minds with first-person experience. Somebody else will do it, I’m sure, but for now, I’m not gonna be the one to do it. I’m still wrestling with this.

GUNNAR: Well, that’s fascinating and terrifying… Do you believe other people are close to outlining the same critical factors as you were planning to publish?

MICHAEL LEVIN: I know somebody who’s come very close, and I think he will probably do it. And I’m not saying we have all the factors, but I think we’re quite close.

GUNNAR: Is your fear that, if you pulled the trigger and published this, those critical factors would actually be very easily implemented in different substrates?

MICHAEL LEVIN: Yes, I think so. Of course, I wouldn’t say it will be “easy” like trivial, but “easy” as in completely doable.

People are so focused on today’s AIs, but those models look like they are trapped in a box. And so it’s easy for us to look at an AI chatbot and think that’s not “real” intelligence, not “grounded in real-world experience”. But what are you going to do when there are people who have AI-powered chips interfacing with their own brains? What if one day I have a brain that is 50% silicon — are you going to tell me I’m “not real”? Does it have to be 84% before you’re not considered “real” anymore? People really need to understand that these large language models are not the main problem. We, and certainly our children, will be living with an incredible diversity of hybrid beings. All these ancient heuristics we have for judging the intelligence and “realness” of things — like what do you look like and how did you get here (meaning: what kind of hardware are you composed of, and were you engineered or naturally evolved?) — none of that is going to fly anymore. That kind of thinking will become completely irrelevant very soon, and we need new principles to deal with it.

See, what I find super weird with how most people think about this stuff is that it’s almost like a religion. Somebody will say: “That’s a machine. It was made by an engineer. I’m real!” Okay, so let me try to understand what you’re saying… You’re saying that the mutation and selection forces, all these random meanderings of the cosmic rays that are hitting your DNA, the ones that gave you an optic nerve that goes the wrong way out of your retina so that you have a big blind spot that your brain has to fill in, the ones that gave you all your susceptibility to bacteria, lower back pain, and all this other junk, you’re telling me that this process has a monopoly on making minds? Rational engineers with the ability to figure out what they’re doing couldn’t possibly achieve it? And by the way, those same engineers can use evolutionary search when they want to.

People have this weird religious belief that only the process of evolution and selection can create minds — and I seriously don’t get it. I understand that in the pre-scientific days, you’d have said: “Only God makes minds.” I get that. But some people have just substituted God with evolution, and as a foundational assumption, it’s just as limiting. No law of nature tells us that only evolution can do it. I think we need to come to terms with the fact that we have a strong mind-blindness, and I believe the field of diverse intelligence is a cure for that. Lesson number one is to accept that not all minds will be “like us”.

Sentient Societies (And Squares)

GUNNAR: One of the possibilities I’ve been increasingly interested in after reading your work, as well as the work of some of your colleagues, is the idea that mind and cognition continue to scale beyond the human level. Your work, as well as that of Karl Friston and others, implies that cognition scales up from the bottom level all the way up to the scale we exist at. So you can imagine particles as minimally cognitive entities that scale to create atoms, which scale to create molecules, then cells, then organisms. And if this principle is boundless — which we don’t know, but it’s a hypothesis — then you could imagine that societies and ecosystems are themselves cognitive entities in their own right. In the same way that an organism has goals, preferences, and memories that are inaccessible to any single cell, a society may have a form of cognition that is inaccessible to any of the individuals that make up that society. Does that idea have any merit for you?

MICHAEL LEVIN: I can’t give you a yes or no answer, because you need to do experiments, and nobody has thus far figured out how to conduct an experiment like that. However, it is interesting to notice that we already kind of talk as if that is true. So, for example, we’ll say things like: “I got a letter from the town” or “from the council” or “from the state”, right? Well, who is this entity? It’s not the lady behind the glass who hands you a parking ticket or the guy who inspects your apartment. All I know for certain is that we cannot conclude scientifically that societies and communities are cognitive entities from the armchair — it has to be experimental. So can you train your town? Maybe you can. Does your town have associative memory? Maybe it does. Does the weather? Does the solar system? Maybe, maybe not. You have to do the experiments. It’s an empirical question.

GUNNAR: This is another question I’ve been interested in, whether having a “science of higher scales” is possible — and if so, what would that look like? Because if you look at where science has been most successful from this multi-scale lens, then what you find is that science is most successful when it is aimed at scales below us. We understand particles, atoms, molecules, cells, and objects made of these things better than we understand societies, economies, biospheres, and so on. The higher up the ladder you climb, the more difficult it becomes to do rigorous empirical experiments. Sociology, economics, and political science, for example, are often lumped together under “soft science” for this reason. And as you’ve pointed out, if we view cells as cognitive entities, it is unimaginable that an individual cell could ever fully understand that it is part of this higher-scale system that is an organism. Is there any reason to suspect that we humans are in the same situation, that no individual human could ever possibly understand a “higher” cognitive entity that it is itself embedded in? Or, could we have reached a privileged position where having a science of higher scales is possible?

MICHAEL LEVIN: I think we absolutely can have a science of it, and I think we must have a science of it. I can’t prove this, but I suspect that there’s some sort of Gödel limit around knowing when you’re a part of a larger system and what its goals are. Probably, you can never really know for sure. But I definitely think you can have a science that gives you some guidance and allows you to gather evidence.

On the other hand, I want to tell you two quick stories that kind of illustrate these ideas. Does “Jeremy the snail” ring a bell at all? So around 2016 or so, a colleague of mine who studies left-right asymmetry discovered a snail with a shell that coiled the wrong way (which is a rare trait). Because this is very useful for understanding genetics and development, he wanted to find a mate for this snail, which he named Jeremy. So he basically created a whole campaign around finding a mate with the same shell asymmetry as Jeremy the snail, so that they could breed more snails with the same type of shell. He went and put these ads online, and everybody thought this was adorable. Some newspapers picked it up, and it went kind of viral. And eventually they actually found the right freaking snail, I believe it was in Spain? They brought the two snails together, and happily ever after, right?

But now, just stop and think of everything that came together at a high-scale level to make this happen: all this human knowledge about genetics, left-right asymmetry, the internet, snail restaurants. One day in the future of biomedicine, there may be human patients given medical treatment based on information that was discovered through this process (the left-right chirality of cells and bodies is an important medical topic). The mission of finding a mate for Jeremy the snail might have all these consequences through the annals of biology and human civilization forever. But try to imagine how much of this Jeremy the snail could possibly comprehend. Imagine if he knew that his personal love life was at the center of this massive planetary drama. Jeremy knew nothing other than that he got an awesome new girlfriend. That’s all Jeremy knew. He has absolutely no hope of knowing all that went on for him to meet his mate. But let’s say Jeremy did some experiments. Maybe he could have received some evidence that there was something bigger going on, but I don’t think he could have had a prayer in hell of understanding why these crazy aliens were interested in his love life.

If you extrapolate that, we may imagine that we can gather evidence of patterns that hint at something “bigger” going on around us, and I would hypothesize that those patterns would look like what Carl Jung called synchronicity. We would experience evidence of global patterns that cannot easily be understood reductively. And so I’m open to this, but I don’t think we could ever prove specific instances with certainty.

The other story I find helpful in thinking about this is a science fiction story. So imagine there are these creatures that live deep in the bowels of the Earth, and because of that, they are super dense. And one day, they come up to the surface, and because they are so incredibly dense and their perception is based on gamma rays, and so on, everything on the surface just looks like gas to them. Everything we experience as solid and tangible, they perceive as an ethereal plasma around the Earth. But one of these creatures is a scientist, and he says to the others: “I’ve been doing some experiments tracking the patterns in this gas, and I’ve got this crazy idea that they might actually be cognitive agents.” And of course, all the other creatures say: “You’re out of your mind. This stuff is just gas, they couldn’t possibly be doing anything agential. Not like us, we’re real intelligent creatures!” And then another one says: “Uh, well, what’s the time scale of these patterns?” And the scientist says: “Well, about 100 years.” The others just laugh. “100 years?! You can’t do shit in 100 years. That’s nonsense. We live for millions of years. 100 years is nothing, it’s just a blip. You couldn’t possibly get anything intelligent accomplished in such a short amount of time. Forget it.”

And so the creatures go back down below the surface, thinking they found nothing. The moral is: who’s a pattern and who’s an agent, which is the data and which is the machine, who’s the thinker and what’s a thought – it’s all relative.

My suspicion is that we are in a situation just like that. We look around at the world, and we feel really certain that we know what is or isn’t real, cognitive, or intelligent. We have all these a priori philosophical commitments. We think that cognitive things have to be about medium-sized — preferably soft and squishy — and they have to exist within a particular temporal range. So when we look at particles or galactic patterns, we think “No, that’s too fast or too slow, that couldn’t possibly be a living, cognitive agent.” But I don’t think we can be certain of that until we do experiments to find out.

GUNNAR: In that sense, we humans are extremely lucky, because we have gained access to this thing called Science and Technology, which allows us to extend our intuitions through theoretical tools and measuring instruments. One example that I remember striking me when I was younger was when I first saw sped-up videos of plants. When you see plants at your natural time scale, they just look kind of inert, like they couldn’t possibly be intelligent, cognitive beings. But if you watch films of their lifespans at high speed, suddenly they look alive and agential, like they have some form of goal-directedness that you normally don’t notice.

MICHAEL LEVIN: Absolutely, and that example is also illustrative of what I’ve been saying: you cannot guess any of this from observational data. So even with videos of plants, you can’t say for sure whether you are seeing something that is cognitive or if it’s just a complex blind mechanism — unless you do perturbative experiments. This is really important. You can tell any story you want based on observational data. In 1944, Fritz Heider and Marianne Simmel showed people videos of circles, triangles, and squares moving around in particular ways, and participants would readily say things like “Oh, it’s obvious the triangle is in love with the square, and they’re trying to push out the circle.” So the point is, we can tell all kinds of crazy stories if all we do is observe. But you have to do experiments to find out things like: Can this system solve problems? Does it have a capacity for planning? Does it recognize itself? This is really the core of my argument. We have to stop settling for all these armchair assumptions and actually commit to doing the experiments of asking what kind of mind it might have, with what goals and in what spaces, how do we communicate with it, and what might we owe it.

Please check out Michael Levin’s personal Substack, where he often publishes articles about his lab’s work for a more general audience. See also his articles for Noema and Aeon, and his lab’s website.